
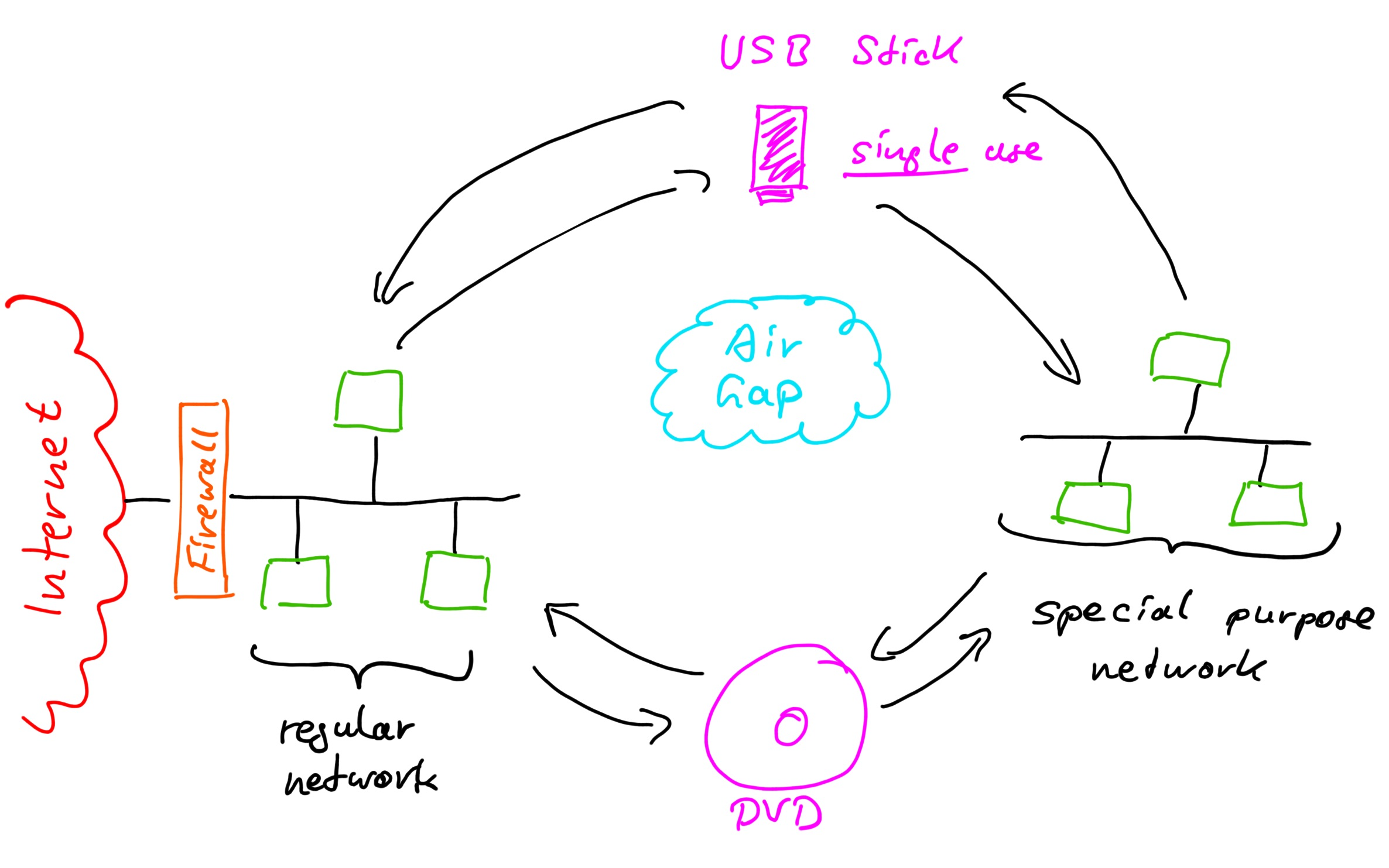

Spark NLP is a Natural Language Processing (NLP) library built on top of Apache Spark ML. It provides simple, performant, and accurate NLP annotations for machine learning pipelines that scale easily in a distributed environment.

Spark NLP comes with 1100+ pretrained pipelines and models in 192+ languages. It supports nearly all NLP tasks, and its modules can be used seamlessly in a cluster. Downloaded more than 5 million times and experiencing 16x growth over the last 16 months, Spark NLP is used by 54% of healthcare organizations and is the world's most widely used NLP library in the enterprise. It has an active community and rich resources where you can find more information and code samples.

Data science projects in high-compliance industries, like healthcare and life sciences, often require processing Protected Health Information (PHI). This may happen because the nature of the project does not allow full de-identification in advance. In such scenarios, the alternative is to create an "AI cleanroom": an isolated, hardened, air-gapped environment where the work happens.

(* All of the steps below were tested on Linux-based operating systems, namely Ubuntu, RHEL, and CentOS.)

First, we install the Spark NLP libraries as follows:

    $ pip install spark-nlp==3.0.2
    $ python -m pip install --upgrade spark-nlp-jsl==3.0.2 --user --extra-index-url $secret

The installation may require some additional steps depending on your OS; you can find more information in this article.

After installing the packages, starting the Spark session with Spark NLP takes a single call to the library's start() function, and the licensed version offers an equivalent call. The start() function basically runs the following code block under the hood and prepares the Spark session with the relevant packages that you need to work with Spark NLP:

    from pyspark.sql import SparkSession

    # secret is the license secret you received with your keys
    spark = SparkSession.builder \
        .config("spark.jars", "" + secret + "/spark-nlp-jsl-3.0.2.jar") \
        .getOrCreate()

Offline mode

As you can see, the relevant jar packages are pulled from Maven and PyPI over the internet. What if we have no internet connection and have to do all of this in an air-gapped network? We can download all the relevant packages outside of our network and copy them over with a mounted disk. Assuming that you already have Python and JDK 8 installed properly in your secure network, here are the steps to follow on a machine that has an internet connection:

1. To get trial keys for Spark NLP for Healthcare, fill in the free trial form and you will receive your keys by email in a few minutes.

2. Install AWS CLI on your local computer following the steps here for Linux and here for MacOS.

3. Then configure your AWS credentials as follows (set the AWS keys you got via the free trial):

    $ aws configure
    AWS Access Key ID : AKIAIOSFODNN7EXAMPLE
    AWS Secret Access Key : wJalXUtnFEMI/K7MENG/bPxRfiCYEXAMPLEKEY

4. Download the following with AWS CLI to your local computer (given that the AWS keys you got in step #1 are properly set in your environment as explained above):

    # find the whl file for your version at
    $ wget
    $ aws s3 cp --region us-east-2 s3:///public/jars/spark-nlp-assembly-3.0.2.jar ~/spark-nlp-3.0.2.jar
    $ aws s3 cp --region us-east-2 s3:///$jsl_secret/spark-nlp-jsl-3.0.2.jar ~/spark-nlp-jsl-3.0.2.jar
    $ aws s3 cp --region us-east-2 s3:///$jsl_secret/spark-nlp-jsl/spark_nlp_jsl-3.0.2-py3-none-any.whl ~/spark_nlp_jsl-3.0.2-py3-none-any.whl
    $ aws s3 cp --region us-east-2 s3:///$jsl_ocr_secret/jars/spark-ocr-assembly-3.0.0-spark30.jar ~/spark-ocr-assembly-3.0.0-spark30.jar
    $ aws s3 cp --region us-east-2 s3:///$jsl_ocr_secret/spark-ocr/spark_ocr-3.0.0.spark30-py3-none-any.whl ~/spark_ocr-3.0.0.spark30-py3-none-any.whl
    $ wget -q

For more information, see the official installation links here and here.
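The download step of the offline workflow can also be scripted. The sketch below is one possible way to assemble the `aws s3 cp` commands listed in this article. The helper name `build_download_commands`, its `bucket` and `dest` parameters, and the placeholder values are illustrative assumptions; the S3 bucket name is not given in the text, so you have to supply it yourself.

```python
from pathlib import Path
import shlex

# (S3 key template, local file name) pairs taken from the download step.
# {jsl} and {ocr} stand for the $jsl_secret and $jsl_ocr_secret values.
ARTIFACTS = [
    ("public/jars/spark-nlp-assembly-3.0.2.jar",
     "spark-nlp-3.0.2.jar"),
    ("{jsl}/spark-nlp-jsl-3.0.2.jar",
     "spark-nlp-jsl-3.0.2.jar"),
    ("{jsl}/spark-nlp-jsl/spark_nlp_jsl-3.0.2-py3-none-any.whl",
     "spark_nlp_jsl-3.0.2-py3-none-any.whl"),
    ("{ocr}/jars/spark-ocr-assembly-3.0.0-spark30.jar",
     "spark-ocr-assembly-3.0.0-spark30.jar"),
    ("{ocr}/spark-ocr/spark_ocr-3.0.0.spark30-py3-none-any.whl",
     "spark_ocr-3.0.0.spark30-py3-none-any.whl"),
]


def build_download_commands(bucket, jsl_secret, ocr_secret, dest,
                            region="us-east-2"):
    """Return one `aws s3 cp` argument list per artifact (hypothetical helper)."""
    commands = []
    for key_template, local_name in ARTIFACTS:
        # Fill in the secrets; keys without placeholders pass through unchanged.
        key = key_template.format(jsl=jsl_secret, ocr=ocr_secret)
        commands.append([
            "aws", "s3", "cp", "--region", region,
            f"s3://{bucket}/{key}",
            str(Path(dest) / local_name),
        ])
    return commands


if __name__ == "__main__":
    # Placeholder values only; substitute your real bucket and secrets.
    for cmd in build_download_commands("<bucket>", "<jsl_secret>",
                                       "<ocr_secret>", "/mnt/usb"):
        print(shlex.join(cmd))
```

Each returned list can be passed to `subprocess.run` as is, or printed with `shlex.join` for review before anything is downloaded. Keeping the artifact names in one table makes it easier to bump versions in a single place.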

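Once the files have been copied into the air-gapped machine, it can help to check that nothing is missing before pointing Spark at them. This is a minimal sketch, assuming the jars sit in a single directory and that the session is configured through `spark.jars`, which takes a comma-separated list of paths. `local_jars_config` is a hypothetical helper name and not part of Spark NLP.

```python
from pathlib import Path


def local_jars_config(directory, jar_names):
    """Build a comma-separated `spark.jars` value from local files.

    Raises FileNotFoundError early if any expected jar is missing,
    so the problem shows up before the Spark session is created.
    """
    base = Path(directory)
    missing = [name for name in jar_names if not (base / name).is_file()]
    if missing:
        raise FileNotFoundError(
            f"missing jars in {base}: {', '.join(missing)}"
        )
    return ",".join(str(base / name) for name in jar_names)


# Example use (assumed layout, shown as a comment because it needs pyspark):
#   jars = local_jars_config("/mnt/usb", ["spark-nlp-3.0.2.jar",
#                                         "spark-nlp-jsl-3.0.2.jar"])
#   spark = SparkSession.builder.config("spark.jars", jars).getOrCreate()
```

Failing fast here is a design choice: a missing jar otherwise tends to surface later as a class-loading error that is harder to trace back to the copy step.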